Most projects need a database sooner or later. Connecting to a database from a Lambda is not a big challenge, but if you also want to reach the internet from the same Lambda, you will run into some issues.

In the previous posts, we connected to the Binance API. Our Lambda was not inside our VPC (Virtual Private Cloud), so this was easy: a Lambda outside a VPC runs in an AWS-managed network that can reach the internet by default. But as long as it is not in the VPC where the database will live, it cannot connect to the database. We have three options.

A VPC is a virtual network that you can configure for your needs. It's one of the foundational services of AWS and you will have to work with it sooner or later. I really suggest checking out the documentation for this.

1. Lambdas inside the VPC

If all our Lambdas are in the VPC, we can easily use all the resources there. It requires some setup (Subnets, NAT, Internet Gateway, Route Tables) to access the internet from the Lambda, and setting up network interfaces can hurt cold start time. Also, keep in mind for big and scalable projects that the number of addresses available in the network is limited by the CIDR blocks you define for the VPC and its subnets.

CIDR blocks are ranges of IP addresses defined by a base IP and a prefix length.

For example, 10.192.0.0/24 means that the first 24 bits of the IP address are fixed, and only the last number can change. That gives 256 different IP addresses.

There are 32 bits in an IPv4 address, 8 for each of the four numbers, so /24 means the first three numbers are fixed, /16 means the first two are fixed, and so on.
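If you want to double-check this arithmetic, Python's standard `ipaddress` module can do it for you. This is just a small sketch using the example block from above; `block_size` is a helper name I made up for illustration.

```python
import ipaddress

# The example block from the text: the first 24 bits are fixed.
block = ipaddress.ip_network("10.192.0.0/24")

print(block.num_addresses)   # total number of addresses in the block
print(block[0], block[-1])   # first and last address of the range


def block_size(prefix_length: int) -> int:
    """Number of addresses in an IPv4 block with the given prefix length."""
    return 2 ** (32 - prefix_length)


for prefix in (24, 16):
    print(f"/{prefix}: {block_size(prefix)} addresses")
```

The shorter the prefix, the more bits are left free, so each bit you give up doubles the size of the block.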

2. Lambdas outside the VPC

If all the Lambdas are outside the VPC, it's going to be faster at cold starts and there's no limitation for the number of Lambdas. But to access resources inside the VPC you need to make them public, which is not good for security.

3. Lambdas everywhere

You can have some Lambdas inside the VPC and some outside. Lambdas can call each other, so if you can split the code into one Lambda that accesses the database and one that connects to the internet, it can work. It can also help with scalability, as Lambdas should be small anyway and have a single responsibility.
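To give an idea of what "Lambdas calling each other" could look like, here is a minimal sketch where the internet-facing Lambda synchronously invokes the one inside the VPC. It assumes `lambda_client` is a boto3 Lambda client; the function name `db-reader` and the payload are hypothetical.

```python
import json


def query_via_db_lambda(lambda_client, function_name: str, payload: dict) -> dict:
    """Synchronously invoke the VPC-side Lambda and return its decoded JSON result."""
    response = lambda_client.invoke(
        FunctionName=function_name,
        InvocationType="RequestResponse",  # wait for the callee to finish
        Payload=json.dumps(payload).encode("utf-8"),
    )
    # The response payload is a byte stream holding the callee's return value.
    return json.loads(response["Payload"].read())


# In a real handler the client would come from boto3 (assumed to be available):
#   import boto3
#   lambda_client = boto3.client("lambda")
#   rows = query_via_db_lambda(lambda_client, "db-reader", {"symbol": "BTCUSDT"})
```

Passing the client in as a parameter keeps the function easy to test without touching AWS.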

Depending on the requirements I would either go with 1 or 3. In this example, I'll show you the first option as that requires the most configuration.

Set up the VPC

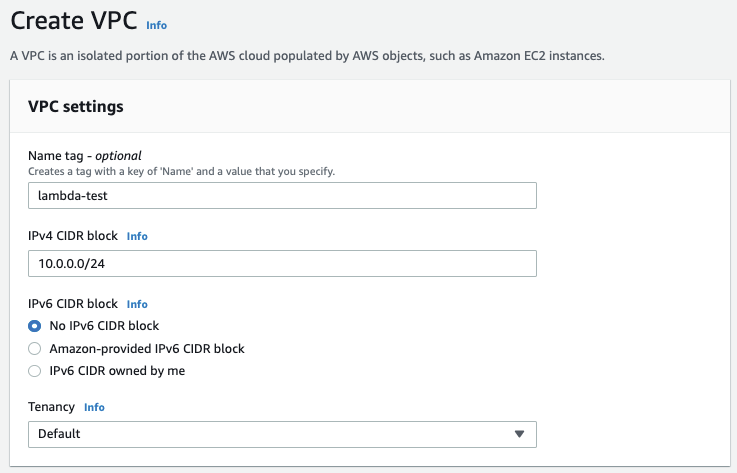

To have the Lambda and the RDS in the same network we have to assign a VPC to them. Create a new VPC.

Just add a name and a CIDR block. 10.0.0.0/16 should be more than enough for now. That's 65536 addresses and the last two numbers of the IP can be anything.

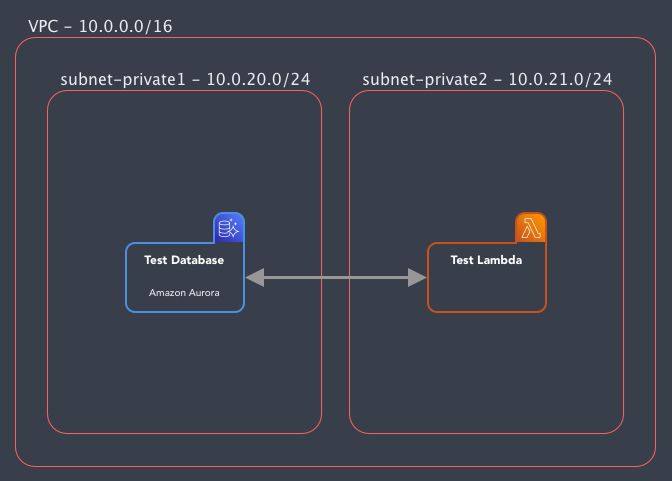

The next step is to add Subnets. Subnets are ranges inside the IP range we defined for the VPC that can have different routing setups. For example one subnet can connect to the internet through an Internet Gateway, another subnet can connect to a specific server, etc.

We will add two subnets to the VPC:

lambda-test-private1: 10.0.20.0/24

lambda-test-private2: 10.0.21.0/24

Don't forget to set different Availability Zones for them as Amazon Aurora requires at least two Availability Zones.
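I'm doing all of this in the console, but the same steps could be scripted. Below is a rough sketch, assuming `ec2` is a boto3 EC2 client. The Availability Zone names are placeholders for whatever your region offers, and `create_network` is a name I made up.

```python
def create_network(ec2, azs=("eu-west-1a", "eu-west-1b")):
    """Create the VPC and the two private subnets described above.

    `ec2` is expected to be a boto3 EC2 client; the zone names in `azs`
    are placeholders and must exist in the region you are using.
    """
    vpc_id = ec2.create_vpc(CidrBlock="10.0.0.0/16")["Vpc"]["VpcId"]

    subnets = {
        "lambda-test-private1": "10.0.20.0/24",
        "lambda-test-private2": "10.0.21.0/24",
    }
    subnet_ids = []
    for (name, cidr), az in zip(subnets.items(), azs):
        subnet = ec2.create_subnet(
            VpcId=vpc_id,
            CidrBlock=cidr,
            AvailabilityZone=az,  # a different zone for each subnet
            TagSpecifications=[{
                "ResourceType": "subnet",
                "Tags": [{"Key": "Name", "Value": name}],
            }],
        )
        subnet_ids.append(subnet["Subnet"]["SubnetId"])
    return vpc_id, subnet_ids
```

With a real client you would call it as `create_network(boto3.client("ec2"))` and keep the returned IDs for the database setup.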

When I first used this, it always bugged me where these numbers (20, 21, 24) come from, so I'll explain it real quick. We stated in the VPC that it may use any IP that starts with 10.0 (10.0.x.x). For our subnets, we define that the first private subnet starts with 10.0.20.x: the first 24 bits are fixed, so only the last number can be anything. The same goes for the second one with 10.0.21.x. So the VPC can use any of these addresses, but each subnet only lives in its own range. When we attach both private subnets to our Lambda, its private IP will start with 10.0 and the next number will be either 20 or 21.
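The relationship between the VPC range and the two subnets can also be checked with Python's standard `ipaddress` module. This is only a sanity-check sketch; `owning_subnet` and the sample address are made up for illustration.

```python
import ipaddress

vpc = ipaddress.ip_network("10.0.0.0/16")
private1 = ipaddress.ip_network("10.0.20.0/24")
private2 = ipaddress.ip_network("10.0.21.0/24")

# Both subnets must sit inside the VPC range and must not overlap each other.
print(private1.subnet_of(vpc), private2.subnet_of(vpc))
print(private1.overlaps(private2))


def owning_subnet(ip: str):
    """Return the private subnet that contains the given IP, or None."""
    address = ipaddress.ip_address(ip)
    for subnet in (private1, private2):
        if address in subnet:
            return subnet
    return None


print(owning_subnet("10.0.21.37"))
```

An address like 10.0.30.5 is still inside the VPC range, but it belongs to neither subnet, so `owning_subnet` returns `None` for it.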

And that's it! We have our own VPC set up where we can add our instances!

Set up an RDS instance

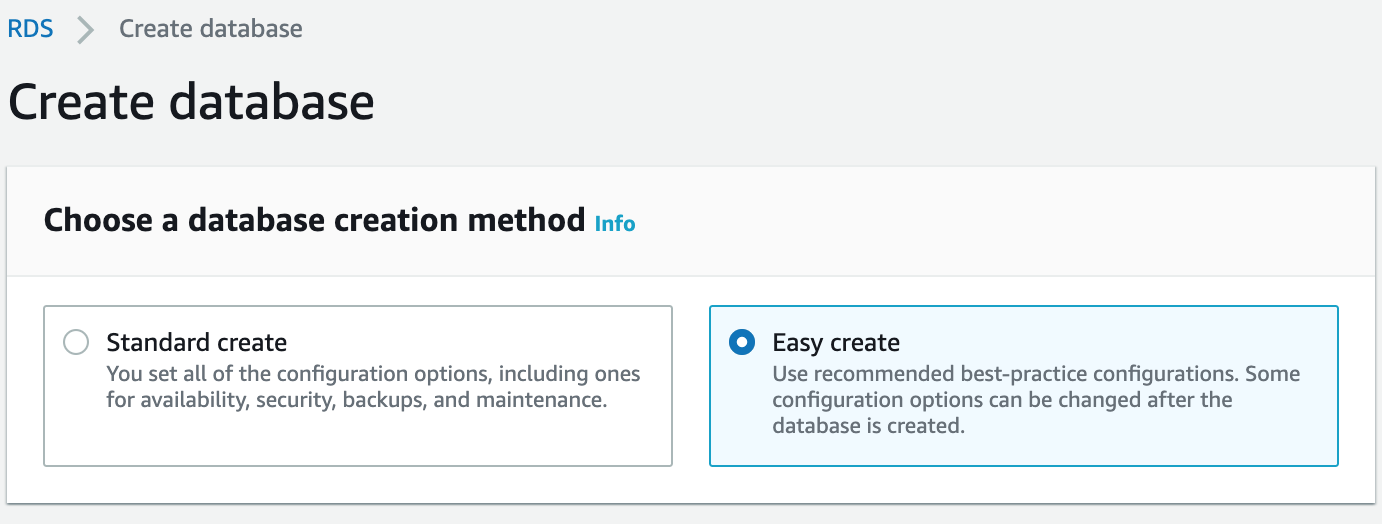

Let's start with creating an RDS database. Head to RDS and press Create an instance. You will face a simple choice at the beginning:

How sweet of Amazon! They already have an easy creation option for us! This way we can save some time, as we don't have to set all the parameters ourselves.

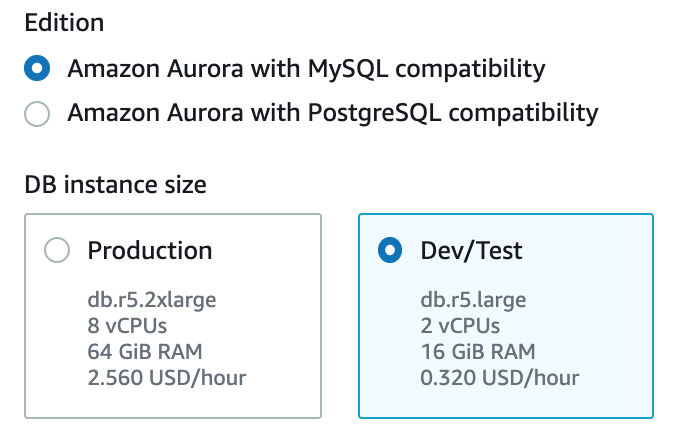

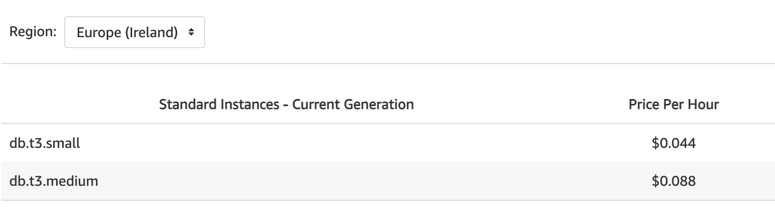

But wait! It's going to start a db.r5.large instance for us which we can't even change! Is that good for us? 0.320 USD/hour. If we run it for a day while we test that's already 7.68 USD. That's a lot for a simple test DB! It would be a lot cheaper to run an EC2 instance with MySQL on it. What's the smallest we can get?

A db.t3.small instance is just 0.044 USD/hour so it's more than 7 times cheaper. So what can we do? We have to switch from "Easy create" to "Standard create".
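Here is the same price comparison as a few lines of Python, using only the hourly prices quoted above. I use `Decimal` so the results are not blurred by floating point rounding.

```python
from decimal import Decimal

HOURS_PER_DAY = 24

# On-demand prices quoted in the text, in USD per hour.
r5_large = Decimal("0.320")
t3_small = Decimal("0.044")


def daily_cost(hourly_price: Decimal) -> Decimal:
    """Cost of running one instance for a full day."""
    return hourly_price * HOURS_PER_DAY


print(daily_cost(r5_large))           # cost of a day on db.r5.large
print(daily_cost(t3_small))           # cost of a day on db.t3.small
print(round(r5_large / t3_small, 2))  # how many times cheaper the small one is
```

Prices change over time and differ by region, so treat these numbers as a snapshot.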

Amazon Aurora is a MySQL- and PostgreSQL-compatible, cloud-optimized database from Amazon. They claim up to 5x the performance of a regular MySQL server, plus many more features.

What do we have to set?

Engine type: Amazon Aurora

Edition: Let's go with MySQL

In the Settings just add a name and a master user for your cluster

DB instance size: Dev/Test

For instance class choose the db.t3.small

Add the VPC you just created

You can just go with the default security group (everything in the same group can access each other), but it is better to create a new one with an inbound rule that allows all traffic from the default security group.

In the Additional settings, you can set up a default database by adding a database name. You can set a name like "test"

And you can leave the rest at default.

IMPORTANT! You will not be able to see the master password later on, so be sure to save it!
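For reference, the console settings above map roughly to three RDS API calls. The sketch below assumes `rds` is a boto3 RDS client; every identifier in it (`lambda-test-*`, `admin`, `test`) is a placeholder, and whether db.t3.small is offered depends on the engine version and region.

```python
def create_aurora_cluster(rds, subnet_ids, security_group_id, master_password):
    """Create an Aurora MySQL cluster with a single db.t3.small writer.

    `rds` is expected to be a boto3 RDS client. All identifiers used
    here are placeholder names, not values from a real account.
    """
    # Aurora needs a subnet group that spans at least two Availability Zones.
    rds.create_db_subnet_group(
        DBSubnetGroupName="lambda-test-subnets",
        DBSubnetGroupDescription="Private subnets for the test cluster",
        SubnetIds=subnet_ids,
    )
    rds.create_db_cluster(
        DBClusterIdentifier="lambda-test-cluster",
        Engine="aurora-mysql",
        MasterUsername="admin",
        MasterUserPassword=master_password,  # save it, you can't read it back later
        DatabaseName="test",                 # the default database
        DBSubnetGroupName="lambda-test-subnets",
        VpcSecurityGroupIds=[security_group_id],
    )
    # The cluster holds the storage; the instance is the actual writer node.
    rds.create_db_instance(
        DBInstanceIdentifier="lambda-test-writer",
        DBClusterIdentifier="lambda-test-cluster",
        DBInstanceClass="db.t3.small",
        Engine="aurora-mysql",
    )
```

The subnet IDs would be the two private subnets from the VPC section, and the security group is the one discussed in the list above.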

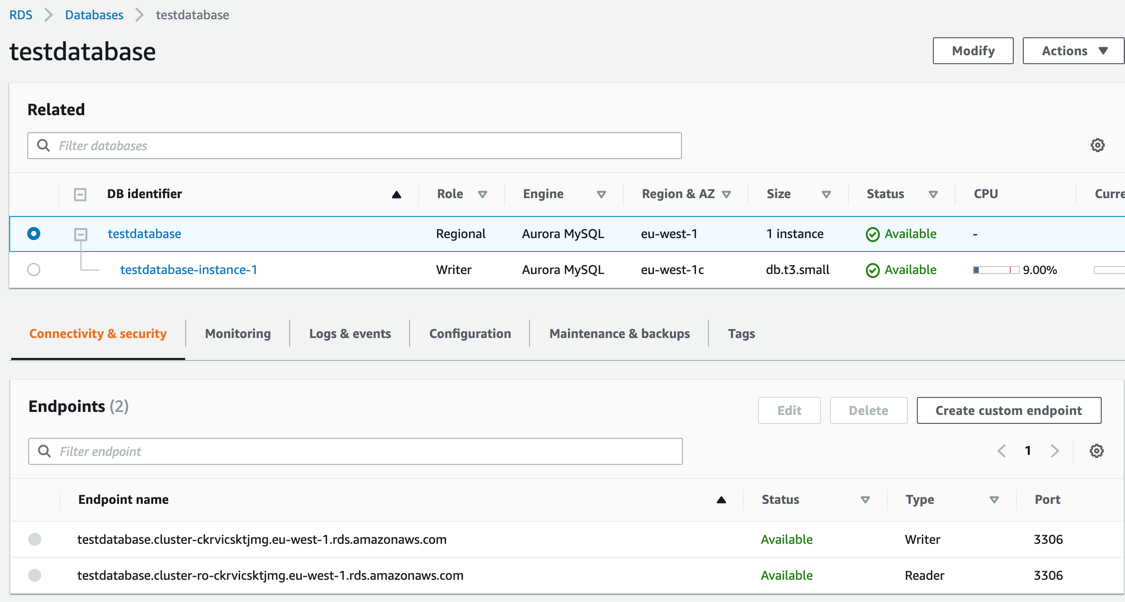

After we're done, we can see that our Database Cluster is created with one Writer instance in it. Later you can add more reader instances to it if you want to.

If you click on the Cluster you can see your database endpoints. There is a writer and a reader endpoint. If you have a service that only reads the DB or you want to connect to it from your machine to see the data, use the Reader.

Because the database was not set up as publicly accessible, we can't connect to it directly from our machine. For this purpose, we can set up a VPN (we'll touch on that later), or you can create a small EC2 instance in the VPC and give it access to the cluster, so you can use that machine as an SSH tunnel.
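If you go the SSH tunnel route, the idea is to forward a local port through the EC2 instance to the database endpoint. Here is a small sketch that builds such a command. The host names, the `ec2-user` login and port 3306 (the usual MySQL port) are assumptions you would replace with your own values.

```python
def ssh_tunnel_command(bastion_host, db_endpoint, local_port=3306,
                       db_port=3306, user="ec2-user"):
    """Build an ssh command that forwards a local port to the database
    through the EC2 instance inside the VPC."""
    return [
        "ssh",
        "-N",  # no remote command, only port forwarding
        "-L", f"{local_port}:{db_endpoint}:{db_port}",
        f"{user}@{bastion_host}",
    ]


# Placeholder host names, not real endpoints.
command = ssh_tunnel_command("bastion.example.com", "reader-endpoint.example.com")
print(" ".join(command))

# To actually open the tunnel you could run it with subprocess:
#   import subprocess
#   subprocess.run(command, check=True)
```

While the tunnel is open, a MySQL client on your machine can connect to 127.0.0.1 on the local port as if the database were running locally.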

Some extra concepts

- You can set up Auto Scaling for Aurora to add more Reader instances if you have heavy usage

- You can also choose different DB instance classes for the new replicas

- You can also set up a custom endpoint that routes more complex queries to the bigger instances

- There's also the option of using a Serverless database to handle unpredictable workloads. It autoscales to your needs

- There's a Multi-Master configuration for critical systems

Next time we'll set up our Lambda to connect to our new database and we'll face some VPC issues doing that!

Cover photo by Ylanite Koppens from Pexels